AI Designer · Solo · 2026

An AI chat app

you can see through.

Memory you can toggle. Influence you can witness. A knowledge base you can walk through. Local-first, zero cloud, zero telemetry.

~1 week

Solo build

4

Design surfaces

300+

Chunks tested

~900ms

Firing pulse

0

Servers, 0 telemetry

Role

Solo designer, researcher, engineer — product strategy through frontend, backend, and ML integration.

Type

Self-initiated product build. Shipped as a cross-platform desktop app.

Stack

Electron · umap-js · k-means · text-embedding-3-small · Anthropic / OpenAI APIs

Platforms

macOS · Windows · Linux. A single-file Electron app with no build step during development.

01 · Problem

Today's AI chat

is opaque by default.

Single-turn Q&A works great. But using AI as a long-term thinking partner breaks on three opacities — none of which are about model capability.

01 / MEMORY

Can't see what it knows.

Memory lists surface after the fact, flat, uncategorized. No situational toggles. "Use this tone for work, not personal" has no affordance.

02 / INFLUENCE

Can't see what mattered.

Users don't know which memories or documents shaped a reply. Output feels like luck. Users over-trust or under-trust — neither is good UX.

03 / SHAPE

Can't see what's in there.

Uploaded files become a name in a sidebar. A 300-page PDF and a one-line note look the same. The knowledge base is a black bag.

02 · Iteration

The four moves weren't a plan.

They were what survived.

Instead of defining everything upfront, I approached this as an iterative design process.

I built a minimal version, tested it in real use, identified failure points, and refined one problem at a time. After multiple iterations, four key design decisions emerged as consistently effective.

The following sections document that evolution.

ITER 01

WHERE I STARTED

Starting with a stateless conversation model

What it was: A plain chat box. Type a question, get a reply. No files, no memory, nothing saved between sessions.

Why that wasn't enough

Every time I opened it, it had forgotten everything. If I told it Monday "I'm a designer working on a music app," on Tuesday it had no idea who I was. Great for one-off questions. Useless as a thinking partner.

ITER 02

+ FILE READING

Grounding AI responses with user data

What I added: Drag in a PDF, a résumé, meeting notes — the AI would actually read them and use them when answering. I also added a little "Sources" list under each reply so you could see where an answer came from.

Why that wasn't enough

The sources were broken. I'd ask "what's my identity?" and it would cite a random SEO doc. The same file got listed three or four times for the same answer — like filler. The "Sources" label looked like evidence but was really just noise. I couldn't trust my own app.

ITER 03

+ MEMORY CARDS

Introducing persistent user context (memory)

What I added: Little notes the AI always sees — organized by type. Who I am. Projects I'm working on. Preferences. Things I don't want it to do. Flip a card off when it doesn't apply to the current conversation.

Why that wasn't enough

The cards lived inside a separate pop-up window. Out of sight, out of mind. I'd write "keep replies under 3 sentences" and then have no way of knowing whether the AI was actually using that card on any given reply, or just ignoring it. The memory existed — but I couldn't see it working.

ITER 04

+ VISUAL FEEDBACK

Making AI reasoning partially visible

What I added: When the AI replied, the cards it had actually used would flash amber for about a second, like a little heartbeat. I also built a visual map of everything in the knowledge base: dots grouped into topic clusters you could zoom and pan around.

Why that wasn't enough

Both of these lived inside pop-up windows. To see which card flashed, I had to click the Memory button, leave the chat, watch the flash, then come back to read the reply. The information was there — the cost of looking at it was too high. So I just stopped looking.

ITER 05

WHERE I AM NOW

Integrating context and reasoning into the conversation layer

What I added: Small colored tags directly under every AI reply, showing which memory cards it just used. Hover one to preview its content; click it to edit the memory on the spot. For sources: show a file only if it's actually relevant to the question, and never show the same file twice.
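The sources rule (a relevance floor, one entry per file) can be sketched as a small filter. This is a minimal TypeScript sketch, not the app's code: the `RetrievedChunk` shape, the `selectSources` name, the 0.35 similarity floor, and the three-source cap are all hypothetical. It dedupes by file before truncating, so one long document with many matching chunks can't crowd out the others.

```typescript
// Hypothetical shape of one retrieved chunk; field names are illustrative.
interface RetrievedChunk {
  fileId: string;
  fileName: string;
  score: number; // cosine similarity to the question, higher = more relevant
}

// Drop weak matches, keep only the best chunk per file, then rank and cap the list.
function selectSources(
  chunks: RetrievedChunk[],
  minScore = 0.35, // hypothetical relevance floor
  maxSources = 3, // hypothetical cap on listed sources
): RetrievedChunk[] {
  const bestPerFile: { [fileId: string]: RetrievedChunk } = {};
  for (const chunk of chunks) {
    if (chunk.score < minScore) continue; // not relevant enough to cite
    const current = bestPerFile[chunk.fileId];
    if (current === undefined || chunk.score > current.score) {
      bestPerFile[chunk.fileId] = chunk; // dedupe: one entry per file
    }
  }
  return Object.keys(bestPerFile)
    .map((fileId) => bestPerFile[fileId])
    .sort((a, b) => b.score - a.score)
    .slice(0, maxSources);
}

// Example: the off-topic SEO doc is filtered out and resume.pdf is listed once.
const sources = selectSources([
  { fileId: "seo", fileName: "seo-notes.md", score: 0.22 },
  { fileId: "cv", fileName: "resume.pdf", score: 0.61 },
  { fileId: "cv", fileName: "resume.pdf", score: 0.58 },
  { fileId: "bio", fileName: "about-me.txt", score: 0.47 },
]);
console.log(sources.map((s) => s.fileName)); // [ 'resume.pdf', 'about-me.txt' ]
```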

What changed

I can now glance at a reply and know why the AI said what it said, without ever leaving the chat. Sources became real evidence again instead of noise. The four design moves in the next section (Structure, Surface, Navigate, Retain) are the ones that earned their place by surviving all of this.

03 · Design framework

Structure → Surface → Navigate → Retain

MOVE 01

Structure

Turn hidden memory into a deck of typed, toggleable cards. User curates, model respects.

MOVE 02

Surface

Animate the invisible. A 900ms amber pulse shows which memories fired — without stealing attention from the answer.

MOVE 03

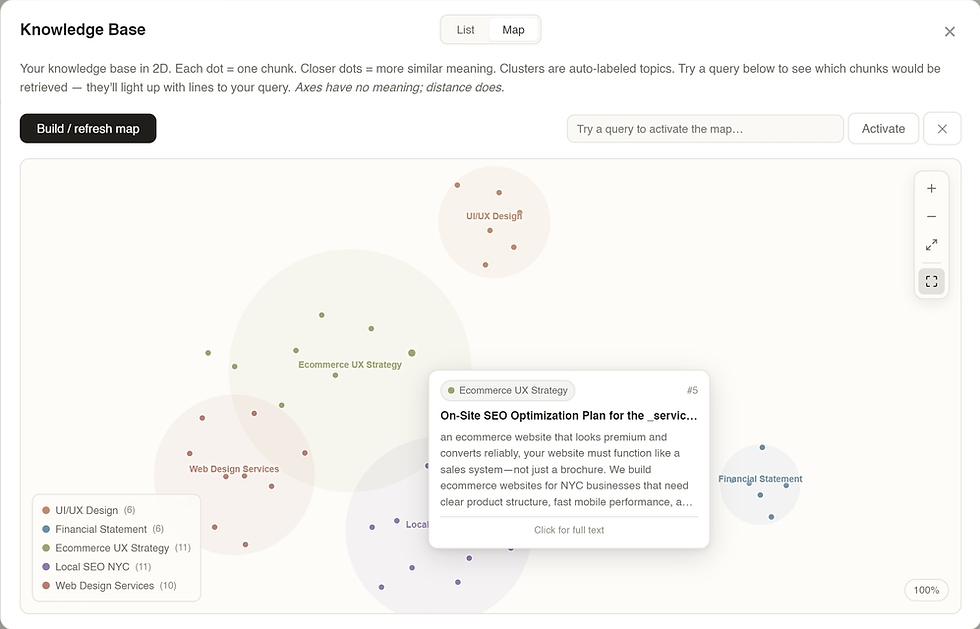

Navigate

Project embeddings to 2D. Make latent space a place users can pan, zoom, and query — not a lookup table.

MOVE 04

Retain

Store everything locally in one deletable folder. Trust through architecture, not policy copy.

MOVE 01 · STRUCTURE

Memory Cards

— a deck, not a textarea.

Six typed categories. identity · project · preference · taboo · style · general. Chosen after testing free-form tags (sprawled to 40+ in a week) and three broad buckets (too coarse — preferences and taboos behave differently at prompt-injection time). Six was the smallest set the model actually respected.
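One way to express the six-category schema in code, and to let taboos read differently from preferences when enabled cards are injected into the prompt, is a closed union type plus per-type headings. This is a hypothetical sketch: the `MemoryCard` fields, `buildMemoryPrompt`, and the heading wording are illustrative assumptions, not the app's actual prompt format.

```typescript
// The six categories named in the case study.
const CARD_TYPES = ["identity", "project", "preference", "taboo", "style", "general"] as const;
type CardType = (typeof CARD_TYPES)[number];

// Hypothetical card shape; field names are assumptions.
interface MemoryCard {
  id: string;
  type: CardType;
  text: string;
  enabled: boolean; // toggled off = never sent to the model
}

// Hypothetical headings: taboos are framed as prohibitions, the rest as context.
const HEADINGS: Record<CardType, string> = {
  identity: "About the user",
  project: "Current projects",
  preference: "Preferences (follow when relevant)",
  taboo: "Never do the following",
  style: "Response style",
  general: "Other notes",
};

// Group enabled cards by type, in a fixed order, into one prompt section each.
function buildMemoryPrompt(cards: MemoryCard[]): string {
  const sections: string[] = [];
  for (const type of CARD_TYPES) {
    const items = cards.filter((c) => c.enabled && c.type === type);
    if (items.length === 0) continue; // skip empty categories entirely
    sections.push(`## ${HEADINGS[type]}\n` + items.map((c) => `- ${c.text}`).join("\n"));
  }
  return sections.join("\n\n");
}

const prompt = buildMemoryPrompt([
  { id: "1", type: "identity", text: "Designer working on a music app", enabled: true },
  { id: "2", type: "taboo", text: "Use emoji", enabled: true },
  { id: "3", type: "style", text: "Keep replies under 3 sentences", enabled: false },
]);
console.log(prompt);
```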

A parse-paste flow. User pastes a messy brain-dump. One LLM call turns it into cards. User reviews, edits, toggles. 30-minute setup → 30 seconds.
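The parse-paste step depends on the model returning something machine-readable. A defensive parser for that reply might look like this; it assumes the prompt asks for a JSON array of `{type, text}` objects (the case study doesn't specify the format), and `parseCardDrafts` is a hypothetical name. Unknown types fall back to `general` and empty cards are dropped, so the review screen only ever shows valid drafts.

```typescript
const CARD_TYPES = ["identity", "project", "preference", "taboo", "style", "general"] as const;
type CardType = (typeof CARD_TYPES)[number];

// Hypothetical draft shape shown on the review screen before the user accepts it.
interface DraftCard {
  type: CardType;
  text: string;
  enabled: boolean;
}

function isCardType(value: unknown): value is CardType {
  return typeof value === "string" && (CARD_TYPES as readonly string[]).indexOf(value) !== -1;
}

// Assumes the model was asked for a JSON array like [{"type": "...", "text": "..."}].
function parseCardDrafts(modelReply: string): DraftCard[] {
  // Models sometimes wrap JSON in prose or code fences; take the outermost array.
  const start = modelReply.indexOf("[");
  const end = modelReply.lastIndexOf("]");
  if (start === -1 || end <= start) return [];
  let raw: unknown;
  try {
    raw = JSON.parse(modelReply.slice(start, end + 1));
  } catch {
    return []; // unparseable reply: show nothing rather than garbage
  }
  if (!Array.isArray(raw)) return [];
  const drafts: DraftCard[] = [];
  for (const item of raw) {
    if (typeof item !== "object" || item === null) continue;
    const { type, text } = item as { type?: unknown; text?: unknown };
    if (typeof text !== "string" || text.trim() === "") continue; // drop empty cards
    drafts.push({ type: isCardType(type) ? type : "general", text: text.trim(), enabled: true });
  }
  return drafts;
}

const reply =
  'Here you go:\n[{"type":"identity","text":"UX designer working on a music app"},' +
  '{"type":"mood","text":"Prefers short answers"},{"type":"taboo","text":""}]';
const drafts = parseCardDrafts(reply);
console.log(drafts.map((d) => d.type)); // [ 'identity', 'general' ]
```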

MOVE 02 · SURFACE

The firing pulse

— explaining AI influence.

After every reply, the app embeds the response and cosine-scores it against every enabled card. Top cards pulse amber for ~900ms. No numbers, no citation popup — just a quiet witnessable cue: these memories shaped this answer.
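The scoring step is plain cosine similarity followed by a top-K cut. A minimal sketch, assuming the reply and each card already have embeddings (the call to `text-embedding-3-small` is omitted); the `topK = 3` and `minScore = 0.3` values and the function names are hypothetical. The returned IDs are the cards that would get the ~900ms pulse.

```typescript
// Hypothetical card record with a precomputed embedding.
interface EmbeddedCard {
  id: string;
  enabled: boolean;
  embedding: number[];
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero for all-zero vectors
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Score every enabled card against the reply and return the IDs that should pulse.
function selectFiringCards(
  replyEmbedding: number[],
  cards: EmbeddedCard[],
  topK = 3, // hypothetical
  minScore = 0.3, // hypothetical
): string[] {
  return cards
    .filter((card) => card.enabled) // disabled cards never fire
    .map((card) => ({ id: card.id, score: cosineSimilarity(replyEmbedding, card.embedding) }))
    .filter((s) => s.score >= minScore)
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((s) => s.id);
}

// Toy 3-D vectors stand in for real 1,536-D embeddings.
const firing = selectFiringCards([1, 0, 0], [
  { id: "tone", enabled: true, embedding: [0.9, 0.1, 0] },
  { id: "project", enabled: true, embedding: [0, 1, 0] },
  { id: "taboo", enabled: false, embedding: [1, 0, 0] },
  { id: "style", enabled: true, embedding: [0.5, 0.5, 0.7] },
]);
console.log(firing); // [ 'tone', 'style' ]
```

OpenAI documents its embeddings as normalized to unit length, so with `text-embedding-3-small` a plain dot product would give the same ranking; the full cosine form keeps the sketch safe for providers that don't normalize.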

Killed the citation list. An earlier version listed "memories used" under each reply. Accurate but ugly — and it trained users to read the footnote instead of the answer. Ambient pulse > explicit citation when the user's primary task is reading.

MOVE 03 · NAVIGATE

The 2D semantic map

— latent space, walkable.

Every chunk: embedded (1,536-D), projected via UMAP to 2D, k-means clustered, LLM-labeled. Every dot is a chunk. Every color is a topic. RAG becomes navigation, not retrieval.
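The clustering step can be sketched as Lloyd's k-means over the projected 2D points. The projection itself would come from umap-js (its `UMAP` class with `nComponents: 2`) and is left out so the block stays dependency-free. Clustering in 2D rather than in the original 1,536-D space is an assumption, as are the deterministic initialization, `maxIter`, and the function names; k-means++ seeding would be the more robust choice in practice.

```typescript
type Point = [number, number];

interface KMeansResult {
  centroids: Point[];
  labels: number[]; // labels[i] is the cluster index of points[i]
}

function kMeans2D(points: Point[], k: number, maxIter = 50): KMeansResult {
  if (k < 1 || k > points.length) throw new Error("k must be in [1, points.length]");
  // Deterministic init from evenly spaced input points (a simplification of k-means++).
  let centroids: Point[] = [];
  for (let c = 0; c < k; c++) {
    const p = points[Math.floor((c * points.length) / k)];
    centroids.push([p[0], p[1]]);
  }
  let labels = points.map(() => -1);
  for (let iter = 0; iter < maxIter; iter++) {
    // Assignment step: each point joins its nearest centroid (squared Euclidean distance).
    const nextLabels = points.map(([x, y]) => {
      let best = 0;
      let bestDist = Infinity;
      for (let c = 0; c < k; c++) {
        const [cx, cy] = centroids[c];
        const d = (x - cx) * (x - cx) + (y - cy) * (y - cy);
        if (d < bestDist) {
          bestDist = d;
          best = c;
        }
      }
      return best;
    });
    const converged = nextLabels.every((label, i) => label === labels[i]);
    labels = nextLabels;
    if (converged) break; // no point changed cluster
    // Update step: move each centroid to the mean of its points; keep it if the cluster is empty.
    centroids = centroids.map((old, c): Point => {
      let sx = 0;
      let sy = 0;
      let n = 0;
      points.forEach(([x, y], i) => {
        if (labels[i] === c) {
          sx += x;
          sy += y;
          n++;
        }
      });
      return n === 0 ? old : [sx / n, sy / n];
    });
  }
  return { centroids, labels };
}

// Two obvious blobs; in the app each point would be one UMAP-projected chunk.
const result = kMeans2D([[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]], 2);
console.log(result.labels.join(",")); // 0,0,0,1,1,1
```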

Interactions: cursor-anchored wheel zoom, click-drag pan with 5px click/drag threshold, rich hover tooltip with match-% bar, query mode that highlights top-K with connecting lines, fullscreen, keyboard shortcuts (+/−/0/F). Map first. Text second.
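Cursor-anchored zoom comes down to one invariant: the world point under the cursor must stay under the cursor after the scale changes. This sketch assumes a simple `screen = world * scale + offset` transform; the `View` type, the scale bounds, and the function names are hypothetical, while the 5px threshold is the one described above.

```typescript
// Map transform assumed by this sketch: screen = world * scale + offset.
interface View {
  scale: number;
  offsetX: number;
  offsetY: number;
}

// Zoom by `factor` while keeping the world point under the cursor fixed on screen.
function zoomAtCursor(
  view: View,
  cursorX: number,
  cursorY: number,
  factor: number,
  minScale = 0.2, // hypothetical bounds
  maxScale = 20,
): View {
  const scale = Math.min(maxScale, Math.max(minScale, view.scale * factor));
  const worldX = (cursorX - view.offsetX) / view.scale; // world point under the cursor
  const worldY = (cursorY - view.offsetY) / view.scale;
  return { scale, offsetX: cursorX - worldX * scale, offsetY: cursorY - worldY * scale };
}

const DRAG_THRESHOLD_PX = 5;

// Pointer travel above the threshold between pointerdown and pointerup is a pan;
// anything smaller is treated as a click on a dot.
function isDrag(downX: number, downY: number, upX: number, upY: number): boolean {
  const dx = upX - downX;
  const dy = upY - downY;
  return Math.sqrt(dx * dx + dy * dy) > DRAG_THRESHOLD_PX;
}

const zoomed = zoomAtCursor({ scale: 1, offsetX: 0, offsetY: 0 }, 100, 50, 2);
console.log(zoomed); // { scale: 2, offsetX: -100, offsetY: -50 }
console.log(isDrag(0, 0, 3, 4), isDrag(0, 0, 6, 0)); // false true
```

In a wheel handler, `factor` might be something like `Math.exp(-event.deltaY * 0.0015)` (a hypothetical sensitivity), which makes one notch in and one notch out cancel exactly.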

MOVE 04 · RETAIN

Local-first architecture

— trust via locality.

All state — every conversation, memory card, KB vector, Gmail credential, API key — lives in one 2.4 MB JSON file on the user's machine. No backend. No account. No sync. No telemetry. The only network traffic is the explicit API call the user configured.

Delete the file = complete reset. That clarity is the feature. The best trust signal is a file the user can delete.

C:\Users\…\AppData\Roaming\local-rag-visual-map-system\

└── config.json   // 2.4 MB: everything you own
    {
      conversations: [...],
      memoryCards: [...],
      kb: { docs, embeddings },
      kbMap: { points, clusters },
      anthropicKey: "***",
      gmailAppPwd: "***"
    }

→ Delete the file to fully reset.
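The "delete to reset" property falls out naturally if loading treats a missing file as a fresh install. A minimal sketch of that load path, with the state shape mirroring the keys shown in config.json; the inner types, `defaultState`, and `loadState` are hypothetical, and reading the file (in Electron, from the folder returned by `app.getPath("userData")`) is left to the caller.

```typescript
// Shape mirrors the keys shown in config.json; inner types are assumptions.
interface AppState {
  conversations: unknown[];
  memoryCards: unknown[];
  kb: { docs: unknown[]; embeddings: number[][] };
  kbMap: { points: [number, number][]; clusters: unknown[] };
  anthropicKey: string;
  gmailAppPwd: string;
}

function defaultState(): AppState {
  return {
    conversations: [],
    memoryCards: [],
    kb: { docs: [], embeddings: [] },
    kbMap: { points: [], clusters: [] },
    anthropicKey: "",
    gmailAppPwd: "",
  };
}

// `raw` is the file's contents, or null when config.json doesn't exist.
// A missing or unparseable file yields a fresh default state: deleting the file is the reset.
function loadState(raw: string | null): AppState {
  if (raw === null) return defaultState();
  try {
    const parsed = JSON.parse(raw) as Partial<AppState>;
    return { ...defaultState(), ...parsed }; // shallow merge over defaults
  } catch {
    return defaultState();
  }
}

const fresh = loadState(null); // file deleted: complete reset
const restored = loadState('{"anthropicKey":"sk-test"}');
const broken = loadState("{not json");
console.log(fresh.memoryCards.length, restored.anthropicKey, broken.kb.docs.length); // 0 sk-test 0
```

The merge is shallow, and a real app would likely back up an unparseable file instead of silently replacing it on the next save.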


04 · Trade-offs

Key design trade-offs that shaped the system

Every design decision eliminates alternatives. These are the trade-offs that materially shaped the experience.

| Decision | Chose | Ruled out |
| --- | --- | --- |
| Projection (Mental Model, Stability) | UMAP: maintains spatial consistency across re-embedding, preserving users’ mental map as the system evolves. | t-SNE: repositions points on each run, breaking spatial memory. PCA: oversimplifies semantic relationships, reducing interpretability. |
| Memory schema (Cognitive Load, Control) | Six fixed categories: reduces cognitive overhead and forces clear decisions about what the AI should retain (e.g. preference vs. constraint). | Free-form tags: quickly fragmented into dozens of labels, reducing clarity. Three broad buckets: too coarse to support meaningful control. |
| Explainability UX (Transparency, Flow) | Ambient firing pulse: surfaces system activity subtly without interrupting reading or decision-making. | Citation lists: shifted attention away from answers toward validation mechanics, increasing cognitive friction. |
| Data architecture (Trust, Privacy) | Local-first: ensures full user control over data, minimizes trust barriers, and reduces perceived risk. | Cloud sync: introduces account dependency, platform lock-in, and additional trust overhead (TOS, storage concerns). |
| Aesthetic direction (Emotion, Approachability) | Warm beige: frames the product as a thinking space rather than a technical tool, lowering intimidation and increasing approachability. | Dark “tech” UI: reinforces distance and signals a system the user doesn’t fully control. |

05 · In motion

30 seconds of the thing

actually working.

Upload PDFs → chunk & embed → build vector map → query “Dallas economy” → watch top-8 relevant chunks light up while the rest fade out.
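The "chunk & embed" step isn't specified in the case study. A common baseline is fixed-size windows with overlap, so text near a boundary shows up in both neighboring chunks. This sketch assumes character-based windows; the 800/100 sizes and the `chunkText` name are hypothetical. Each resulting chunk would then be embedded and become one dot on the map.

```typescript
interface Chunk {
  docId: string;
  index: number; // position of the chunk within its document
  text: string;
}

// Fixed-size character windows; consecutive windows share `overlap` characters.
function chunkText(docId: string, text: string, size = 800, overlap = 100): Chunk[] {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new Error("need size > 0 and 0 <= overlap < size");
  }
  const step = size - overlap;
  const chunks: Chunk[] = [];
  for (let start = 0; start < text.length; start += step) {
    chunks.push({ docId, index: chunks.length, text: text.slice(start, start + size) });
    if (start + size >= text.length) break; // this window already reached the end
  }
  return chunks;
}

// A 2,000-character document yields windows starting at 0, 700 and 1,400.
let sample = "";
for (let i = 0; i < 2000; i++) sample += String.fromCharCode(97 + (i % 26));
const chunks = chunkText("report.pdf", sample);
console.log(chunks.map((c) => c.text.length)); // [ 800, 800, 600 ]
```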

06 · Outcomes

A shipped desktop app, four named moves, one working trust model.

4 moves

Structure, Surface, Navigate, Retain — each mapped to a shipped surface.

1,536→2D

First consumer-grade UMAP knowledge-base view I'm aware of.

~20s

From 12-PDF upload to a clustered, labeled, queryable semantic map.

100%

Local data. Zero servers. Zero telemetry. No account required.

07 · Methodology

How I decided

what to actually build.

Built with Claude as a coding agent via the Agent SDK, which is standard practice in 2026. The interesting part isn't the stack; it's the filters every feature had to pass before it shipped, and the way iteration decisions got made once "working code" stopped being the bottleneck.

STRATEGY — WHAT I OPTIMIZED FOR

Four design filters.

Every feature had to pass all four.

- Proximity over proof. Evidence signals had to live where the eye already was. Citation lists at the bottom of the chat failed this test. Inline chips directly under each reply passed. Modals failed by default, no matter how right the content was.
- Structure over freedom. Flat memory lists scaled poorly with use. Six typed categories (IDENTITY, PREFERENCE, TABOO, STYLE, PROJECT, GENERAL) gave users a grammar to think in: the taxonomy was the prompt. Constraint was the feature.
- Architecture over promise. "Your data is safe" is a policy line. "Delete this one file to fully reset" is a primitive. Trust had to be demonstrable by deletion, not by documentation.
- Animation over explanation. A 900ms amber pulse communicated "this memory fired" in less attention than any citation UI I drafted. Motion as a primitive, not decoration.

METHODOLOGY — HOW I DECIDED

Iteration rules I operated under

when execution was no longer the constraint.

- Friction was the signal. Every feature got a 72-hour test drive in my own workflow. If I avoided opening the app after building it, the feature failed, regardless of whether it technically worked.
- Kill before adding. Each iteration required deleting something. Inline chips only landed after I removed the firing modal. The fix was never "more UI"; it was relocating the UI that already existed.
- Reframe, don't polish. When retrieval cited the same source 4× and chip labels read GENERAL, I didn't iterate the component; I re-derived the shape of the data layer. Small symptoms, deep cause.
- Decisions, not deliverables. In an agent-assisted loop, shipping is cheap and judgment becomes the bottleneck. I structured days around the next taste call (what should a disabled chip look like, should dedupe happen before or after ranking), not around building tickets.

STACK

Electron 30 · vanilla JS · umap-js for 2D projection · Claude / GPT pluggable · text-embedding-3-small · one local config.json as the entire store. No framework, no backend, no telemetry.

AGENT LOOP

Claude driving an Electron repo through the Agent SDK. Custom skills for doc generation, RAG scaffolding, and plugin packaging. My role: spec, taste, direction. Agent's role: code, glue, debug.

DURATION

~1 week of evening work · 5 iterations

Equivalent to a ~4-week build cycle, accelerated through an agent-assisted workflow.

The real shift

When a working build lands in an afternoon, the bottleneck stops being "can we build it?" and becomes "should we?" That's a design bar going up, not down. The tempo of judgment is the new craft, and the methodology above is what I use to keep taste faster than code.

08 · Reflection

Three things I learned

designing for AI.

01 / ANIMATION

Animation is an explainability primitive.

Started writing citation UIs. Ended with a 900ms amber pulse. The pulse communicates more per millisecond of attention than a paragraph of explanation — because it respects that the user is trying to read the answer, not the meta-answer.

02 / SPATIAL

Latent space rewards spatial UI.

Every time I showed the map, the first reaction was spatial — "that lump over there," never "that topic." Giving a semantic space a location turns abstract AI behavior into something a body can reason about.

03 / TRUST